You might as well nail Jell-O to a tree! That’s just about what it feels like to read the Federal Justice Ministry’s draft of the Netzwerkdurchsetzungsgesetz, the Network Enforcement Act (NEA). Many in Germany have long hoped for a better way to combat illegal speech such as racial incitement, hate speech, and threats, so that such statements are recognized and dealt with more quickly. This is legitimate: those on the internet are not exempt from the laws of the land. So long as Germany considers certain speech (incitement, threats, Holocaust denial) to be illegal, those laws will apply everywhere, and must be followed. We cannot stop people from spreading hate online, but as soon as it crosses the line into illegality, it must be responded to.

That the Federal Justice Ministry wants to respond to hate speech is wonderful. And every ministry can only act within its bounds: social programs belong to the Social Ministry, youth work belongs to the Youth Ministry, and tanks to the Defense Ministry. Laws shape how and to what extent the state can carry out justice. The published draft of the Network Enforcement Act shows a readiness to act and has been lauded for it. But that readiness alone does not suffice to write good, intelligent laws.

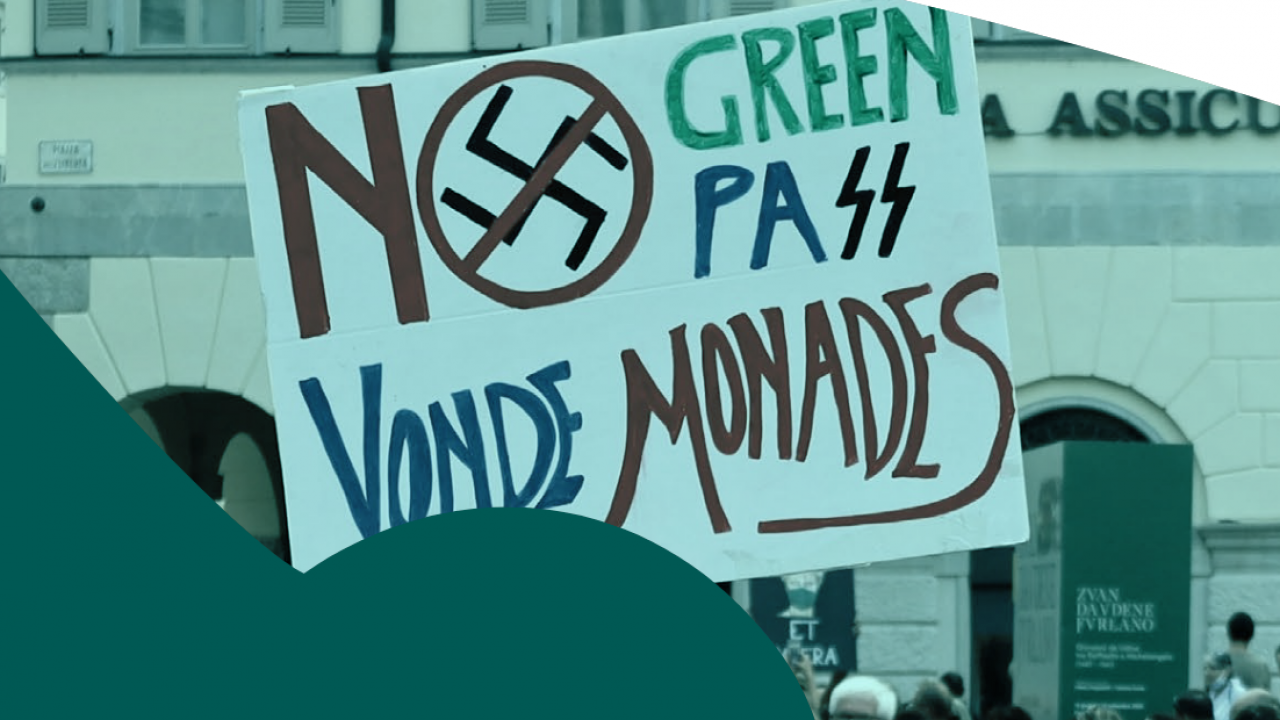

The Act’s first and biggest weakness is that it focuses solely on the administrators and owners of social networks. Social networks like Facebook are therefore being handled like newspapers, which have ultimate responsibility for what they bring to print. Social networks, in contrast, merely reflect what the users—our society—say and do. We cannot make Facebook responsible for racism, anti-Semitism, or the glorification of the Nazi regime on its network. It is the Germans on Facebook who put those things online. Anyone who wants to stop the spread of those poisonous ideologies must confront both the network itself—Facebook in this example—AND the society it reflects. To confront the one without the other is about as sensible as holding tomato farmers responsible when politicians get pelted with rotten fruit. The Justice Ministry can do better: setting our sights on the networks’ administrators, and exclusively on them, gives the impression of shifting responsibility for a societal problem to someone else.

The second problem follows from the first. Under the NEA, social networks would be fined when “obviously” illegal content goes undeleted for more than 24 hours, or a week in more complicated cases. That’s supposed to be Facebook’s decision? What in the world is “obviously” illegal? If I went before a judge and claimed that something was just “obviously” illegal, I’d be laughed out of court. Lawyers should protest—after all, determining if expression is illegal or not is their daily bread. The NEA would make the state into plaintiff and Google, Facebook, and their like—menaced with fines from the Justice Ministry—into judge, jury and executioner. Imagine: a user reports some post as “obviously” illegal to administrators. They, fearing fines should they not act quickly, make a decision and delete the post. But what if they’re wrong? What would the state do then? Who retains oversight of the whole process? Will the Justice Ministry retain a small army of clerks to check if social networks delete posts fast enough? This is an unavoidable transfer of jurisdiction into private hands. And it is a contradiction in itself: on one hand, it is claimed that the social networks, that is, the corporations behind them, have too much power. But on the other hand, this law would transfer near-total responsibility to them!

The third point is the expanded list of content facing deletion. You might not want to believe it, but there’s more on the list than incitement to violence or hate speech. Alongside these familiar crimes stand preparation of an act of violence against the state; complicity in an act of violence against the state; unconstitutional denigration of a constitutional body; treasonable counterfeiting; and the formation of terrorist groups. Remember: the social networks themselves are the ones tasked with deciding and deleting such things. This would be a limiting of free speech under the guise of action against hate speech online. I’m surprised that it’s even constitutional. Applying such a list to social media would open the door to abuse and malfeasance: practically any statement, from any political direction, could be criminalized. To avoid millions in fines, what would the social networks do? Just delete everything that’s reported? That’s hardly an open society and open debate! The idea that only the “evil people and liars” would be affected, sparing the true and the good, is no basis for designing fair and flexible laws.

It’s ultimately a fool’s errand. The law has good intentions. It would help create transparency, as social networks in Germany have never made clear how many people they employ to deal with reports of illegal hate speech, or how well trained those people are. It would be a great relief to have a responsible point of contact for such matters in Germany, which not every social network provides. The social networks themselves naturally bear some responsibility for hate online, and they fulfill it much better now than they used to; there’s no question of that. But such a pointless law simply won’t do.

What should we do instead? How about this: openness and investment among social networks, society itself, and the Justice Ministry, improving their ability to meet the challenges, both legal and humanistic, of our global, digital age. How about a societal debate that lives up to its name?